Introduction: The Digital-Age Legal Research Paradigm

The integration of Artificial Intelligence (AI) is a strategic shift, not a technological upgrade. We are moving from a traditional, labor-intensive profession into a “digital-age legal research paradigm.” AI has evolved from an instrumental function to a core operational necessity, serving as a catalyst for systemic change across judicial practices, regulatory policy, and the very structure of the justice system. This roadmap outlines a deliberate, phased approach to harnessing this transformation for sustained competitive advantage.

Historically, legal research has meant the manual perusal of documents, a time-consuming process constrained by the limits of human attention and prone to error. Modern AI is replacing much of that manual effort with algorithmic analysis. Systems such as ROSS and LexisNexis use Natural Language Processing (NLP) to read, analyze, and extract information at a speed and scale that manual review cannot match. This transition from manual discovery to algorithmic analysis allows legal practitioners to move beyond routine data extraction and focus on higher-level strategic issues. The practice of law changes as a result, and so does the value delivered to clients.

A successful AI integration strategy is inherently interdisciplinary. To navigate the ethical and practical challenges of this new paradigm, effective implementation requires direct and continuous collaboration between the firm’s legal professionals, data scientists, and ethicists. This synergy is essential to ensure that the development and deployment of AI tools align with established legal norms and principles. By embedding this interdisciplinary approach into our strategy, we can confidently build systems that are not only powerful but also trustworthy and compliant.

The strategic advantages of embracing a data-driven legal paradigm are clear. Achieving these advantages, however, requires a deliberate choice of a reliable and legally sound technical architecture. The following section details the foundational technology strategy that will serve as the bedrock of AI initiatives.

1. Foundational Technology Strategy: Mandating “Courtroom-Grade” Reliability

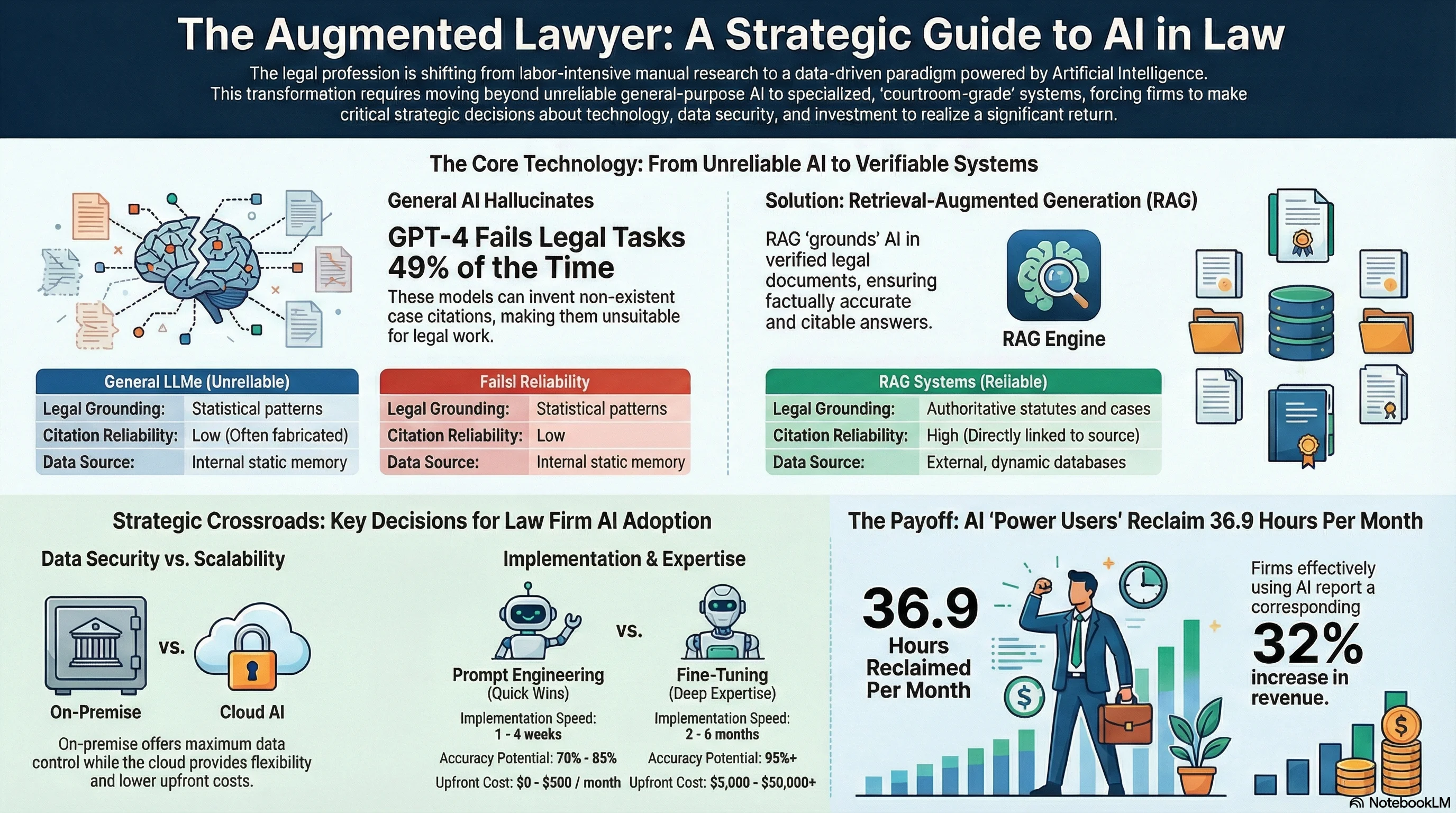

Key Insight: GPT-4 hallucinated in at least 49% of basic case summary tasks. For a profession where accuracy is paramount, ungrounded general-purpose models are a direct threat to work product integrity and client trust.

Choosing the correct underlying AI architecture matters more than most firms realize. The primary technical challenge for any legal AI application is mitigating the risk of “hallucinations”—the generation of factually incorrect or fabricated information, such as non-existent case citations. In a profession where accuracy is paramount, such errors are unacceptable. Therefore, a foundational technology strategy must be built on a principle of “courtroom-grade” reliability.

General-purpose Large Language Models (LLMs) that rely solely on parametric memory—where knowledge is embedded within the model’s static parameters—are fundamentally unsuitable for authoritative legal work. These models cannot reliably cite specific sources and are prone to fabricating information. A 2024 research finding underscores this risk, revealing that GPT-4 hallucinated in at least 49% of basic case summary tasks. This high error rate renders such ungrounded models a direct threat to the integrity of work product and client trust.

To address the critical issue of hallucinations, firms should adopt Retrieval-Augmented Generation (RAG) as the core architectural standard. RAG grounds an LLM's text generation in an external, authoritative source of truth. Each output is anchored in actual legal text retrieved at query time rather than in whatever the model memorized during training. The RAG process involves three key phases:

Vectorization: Authoritative documents (statutes, cases, regulations) are transformed into a semantic index, or vector database.

Retrieval: When a query is made, the system identifies and retrieves the most relevant passages from this database.

Grounding: The retrieved passages are inserted into the LLM's context window, and the model is instructed to base its response on that provided, verifiable information.
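The three phases can be illustrated with a deliberately small sketch. It assumes a bag-of-words term-frequency vector in place of a learned embedding model and an in-memory dictionary in place of a vector database. The source IDs and passages are placeholders, not real legal text. The call to the LLM itself is omitted because it depends on the vendor; the sketch stops at the grounded prompt.

```python
import math
import re
from collections import Counter

def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase term frequencies."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Phase 1: vectorization. Each authoritative passage is indexed under a source ID.
# These passages are illustrative placeholders only.
CORPUS = {
    "Statute-A": "A contract for the sale of land must be in writing to be enforceable.",
    "Case-B": "The court held that an oral agreement to sell land was unenforceable.",
    "Reg-C": "Employers must retain payroll records for three years.",
}
INDEX = {source: vectorize(text) for source, text in CORPUS.items()}

def retrieve(query: str, k: int = 2) -> list[tuple[str, float]]:
    """Phase 2: retrieval. Return the k most similar passages with their scores."""
    q = vectorize(query)
    scored = [(source, cosine(q, vec)) for source, vec in INDEX.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]

def build_grounded_prompt(query: str, k: int = 2) -> str:
    """Phase 3: grounding. Place retrieved passages, tagged with source IDs, in the prompt."""
    context = "\n".join(f"[{src}] {CORPUS[src]}" for src, _ in retrieve(query, k))
    return (
        "Answer using ONLY the sources below and cite each source ID you rely on.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )

print(build_grounded_prompt("Is an oral agreement to sell land enforceable?"))
```

Because every passage carries its source ID into the prompt, any citation in the model's answer can later be checked against what was actually retrieved. A production system would replace the toy vectors with a real embedding model and a vector store.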

| Feature | Parametric Memory (General LLMs) | Non-Parametric Memory (RAG) |
|---|---|---|
| Data Source | Internal weights (Static) | External databases (Dynamic) |
| Citation Reliability | Low (Often hallucinated) | High (Directly linked to source) |
| Update Frequency | Requires full retraining (Expensive) | Real-time vector updates (Efficient) |
| Legal Grounding | Statistical patterns | Authoritative statutes and cases |
| Accuracy (Case Summaries) | Max ~51% (49%+ Hallucination Rate) | Comparable to human work |

By mandating RAG as the foundational architecture, firms ensure a baseline of factual accuracy and reliability. This is the single most important architectural decision a firm will make—everything else builds on it.

2. Implementation Pathways: A Decision Framework for Customization

Key Insight: Most firms won’t fine-tune models—they’ll use vendor platforms or RAG with API-based LLMs. The real decision is how to layer prompt engineering on top of a grounded knowledge base.

Having established a reliable RAG architecture, the next strategic decision involves choosing between implementation pathways. The conventional framing presents this as a choice between prompt engineering and model fine-tuning. But this binary is increasingly outdated.

Prompt engineering shapes AI behavior without altering the model’s parameters. It controls output format, tone, and refusal patterns. For legal work, prompt engineering defines how the model uses retrieved context—ensuring outputs match firm style, include appropriate caveats, and refuse to answer when confidence is low.
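One way to make "refuse to answer when confidence is low" concrete is to gate the model call on retrieval scores and to encode firm rules in a system prompt. The sketch below is hypothetical. The prompt wording, the `min_score` threshold of 0.35, and the chat-style `role`/`content` message format are assumptions chosen for illustration. `call_model` is a placeholder for whichever vendor API the firm uses.

```python
from dataclasses import dataclass

# Hypothetical firm-level instructions; wording is illustrative only.
SYSTEM_PROMPT = (
    "You are a research assistant for a law firm.\n"
    "- Use only the numbered sources provided; cite them as [n].\n"
    "- If the sources do not answer the question, say so instead of guessing.\n"
    "- End every answer with: 'Requires attorney review before use.'"
)

REFUSAL = "The retrieved sources do not support a reliable answer; escalate to an attorney."

@dataclass
class Passage:
    source_id: str
    text: str
    score: float  # retrieval similarity, assumed to lie in [0, 1]

def build_messages(question: str, passages: list[Passage], min_score: float = 0.35):
    """Return chat-style messages, or None when retrieval confidence is too low.

    `min_score` is an assumed threshold to calibrate on the firm's own queries.
    """
    usable = [p for p in passages if p.score >= min_score]
    if not usable:
        return None  # nothing strong enough to ground an answer
    sources = "\n".join(f"[{i}] ({p.source_id}) {p.text}" for i, p in enumerate(usable, 1))
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": f"Sources:\n{sources}\n\nQuestion: {question}"},
    ]

def answer_or_refuse(question: str, passages: list[Passage], call_model) -> str:
    """Refuse without calling the model when no passage clears the threshold."""
    messages = build_messages(question, passages)
    return REFUSAL if messages is None else call_model(messages)
```

The refusal happens before any model call, so a weak retrieval result can never be "rescued" by the model's own parametric guesswork.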

Fine-tuning adapts the model's internal parameters to domain-specific patterns. It requires substantial labeled data, ML expertise, and significant investment. Most law firms will never fine-tune a model. They'll either use vendor platforms like CoCounsel or Harvey (which have already done the specialization work) or run RAG against API-based models like Claude or GPT-4.

RAG does the heavy lifting. The statutes, cases, and precedents the firm relies on become the knowledge base. This is where legal specificity lives: not in fine-tuned model weights, but in the retrieval layer that grounds every response in authoritative sources.

The strategic recommendation: invest in RAG configuration before considering fine-tuning. Most firms achieve their accuracy requirements through better retrieval, legal-aware document chunking, and thoughtful prompt design. Fine-tuning becomes relevant only when these foundations are solid and specific accuracy requirements still aren’t met.
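"Legal-aware document chunking" can be as simple as splitting at provision boundaries rather than at fixed character counts, so each retrieved chunk is a citable unit. The sketch below is one possible approach. The heading patterns (`Section 12`, `§ 3`, `ARTICLE IV`, numbered clauses such as `4.2`) and the `max_chars` limit are assumptions to adapt to the firm's own document styles.

```python
import re

# Assumed heading patterns: "Section 12", "§ 3", "ARTICLE IV", or numbered clauses like "4.2".
HEADING = re.compile(
    r"^(?:Section\s+\d+|§\s*\d+|ARTICLE\s+[IVXLC]+|\d+(?:\.\d+)+)\b", re.MULTILINE
)

def chunk_by_provision(text: str, max_chars: int = 1500) -> list[dict]:
    """Split a legal document at provision headings instead of fixed character counts.

    Each chunk records its provision heading so retrieved passages can be cited
    precisely. Provisions longer than `max_chars` are split again on paragraph breaks.
    """
    starts = [m.start() for m in HEADING.finditer(text)]
    if not starts or starts[0] != 0:
        starts = [0] + starts  # keep any preamble before the first heading
    bounds = starts + [len(text)]
    chunks = []
    for begin, end in zip(bounds, bounds[1:]):
        block = text[begin:end].strip()
        if not block:
            continue
        heading = block.splitlines()[0].strip()
        pieces, current = [], ""
        for para in block.split("\n\n"):
            if current and len(current) + len(para) + 2 > max_chars:
                pieces.append(current)  # close the piece before it exceeds the limit
                current = para
            else:
                current = f"{current}\n\n{para}" if current else para
        pieces.append(current)
        for i, piece in enumerate(pieces):
            chunks.append({"heading": heading, "part": i, "text": piece})
    return chunks
```

Keeping the heading as metadata on every piece means that even the second half of a long clause still retrieves with its correct provision reference.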

The implementation details—data requirements, cost structures, and configuration decisions—vary significantly by firm size and practice area. The next section addresses where this processing should happen, a decision driven by compliance requirements rather than technical preference.

3. Infrastructure and Data Sovereignty Framework

Key Insight: The U.S. CLOUD Act allows authorities to compel U.S.-based cloud providers to surrender data regardless of its physical storage location, creating potential conflicts with data protection obligations to international clients.

In the legal sector, data sovereignty and client confidentiality are paramount. The choice of hosting environment for AI models—on-premises versus cloud—is therefore a primary strategic concern. This decision involves a critical trade-off between the absolute control offered by a “secure fortress” and the elastic convenience of the cloud. The framework must balance these priorities to meet security obligations without sacrificing scalability.

The on-premise hosting model involves running AI systems entirely on the firm's own hardware. This architecture provides the highest level of data sovereignty, because sensitive client information never traverses an external network. It reduces exposure to third-party breaches and simplifies compliance with data protection regulations such as GDPR or HIPAA. However, this approach requires significant capital expenditure for specialized GPU hardware and the recruitment of in-house MLOps talent to manage the infrastructure. For most firms outside the AmLaw 100, the capital requirements make pure on-premise hosting impractical.

Cloud platforms offer near-infinite scalability, rapid deployment, and an operational expenditure model that avoids hardware obsolescence risk. While convenient, this model requires data to be processed externally, introducing risks related to data egress and the extraterritorial reach of foreign laws. For instance, the U.S. CLOUD Act allows authorities to compel U.S.-based cloud providers to surrender data regardless of its physical storage location, creating potential conflicts with data protection obligations to international clients.

A critical security risk in AI systems, even in enterprise cloud instances, is “matter-blindness.” Unlike a Document Management System (DMS), many AI tools do not inherit granular permissions or respect “Ethical Walls.” This creates a risk of data cross-pollination, in which insights from one client's matter inadvertently inform suggestions for another. To mitigate this, all AI implementations must include technical guardrails that encrypt sensitive text in transit and at rest and automatically anonymize client-identifiable data before any model processes it, whether hosted on-premise or in the cloud. This protocol is essential to upholding the duty of confidentiality.
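The anonymization half of that guardrail can be sketched as reversible pseudonymization. Identifying strings are swapped for placeholder tokens before the model call, and the token map never leaves the firm. The regex patterns and the `client_names` list (assumed to come from the matter record) are illustrative assumptions. A production guardrail would add named-entity recognition and firm-specific rules, and encryption in transit would be handled separately by the transport layer.

```python
import re

# Illustrative patterns only; real deployments need broader, tested rules.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

def pseudonymize(text: str, client_names: list[str]) -> tuple[str, dict[str, str]]:
    """Replace client-identifying strings with placeholder tokens before any model call.

    Returns the redacted text and a token -> original map that stays inside the firm.
    """
    mapping: dict[str, str] = {}
    counters: dict[str, int] = {}

    def token_for(kind: str, value: str) -> str:
        for tok, orig in mapping.items():
            if orig == value:
                return tok  # reuse the same token for repeated values
        counters[kind] = counters.get(kind, 0) + 1
        tok = f"[{kind}_{counters[kind]}]"
        mapping[tok] = value
        return tok

    # Longest names first, so "Acme Corp Holdings" is replaced before "Acme Corp".
    for name in sorted(client_names, key=len, reverse=True):
        text = re.sub(re.escape(name), lambda m: token_for("CLIENT", m.group(0)), text)
    for kind, pattern in PATTERNS.items():
        text = pattern.sub(lambda m, k=kind: token_for(k, m.group(0)), text)
    return text, mapping

def reidentify(text: str, mapping: dict[str, str]) -> str:
    """Restore original values in the model's output, locally, after the call returns."""
    for tok, orig in mapping.items():
        text = text.replace(tok, orig)
    return text
```

Because the tokens are consistent within a request, the model can still reason about "[CLIENT_1]" as a single party without ever seeing the client's name.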

Therefore, the infrastructure framework should be hybrid by design. All matters involving high-stakes litigation, M&A due diligence, or client data subject to GDPR will default to the on-premise “secure fortress.” General legal research and business automation tasks with anonymized data may leverage the “elastic convenience” of approved cloud vendors, subject to rigorous security review.
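The hybrid policy can be expressed as an explicit routing rule, which makes it auditable and testable. The sketch below is hypothetical. The matter-type labels and the request flags are assumptions, and the fallback to on-premise for unreviewed or non-anonymized cloud requests is one conservative reading of the policy, not something the framework above specifies.

```python
from dataclasses import dataclass
from enum import Enum

class Target(Enum):
    ON_PREMISE = "on-premise"
    APPROVED_CLOUD = "approved-cloud"

# Matter types that default to the on-premise "secure fortress" (labels are assumptions).
HIGH_STAKES = {"high_stakes_litigation", "m&a_due_diligence"}

@dataclass
class Request:
    matter_type: str        # e.g. "general_research"
    gdpr_data: bool         # does the payload include data subject to GDPR?
    anonymized: bool        # has the guardrail already pseudonymized the payload?
    vendor_approved: bool   # has the cloud vendor passed the firm's security review?

def route(req: Request) -> Target:
    """Hybrid-by-design routing: sensitive work stays on-premise; the rest may use cloud."""
    if req.matter_type in HIGH_STAKES or req.gdpr_data:
        return Target.ON_PREMISE
    if req.anonymized and req.vendor_approved:
        return Target.APPROVED_CLOUD
    # Assumed conservative default: anything not cleared for cloud stays on-premise.
    return Target.ON_PREMISE
```

Encoding the rule in one function gives the security review a single place to inspect and a test suite to run whenever the policy changes.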

4. Competitive Landscape and Platform Selection Strategy

Key Insight: Prioritizing tools with native DMS integration, such as iManage's support for the Model Context Protocol (MCP), goes beyond workflow preference: it directly implements the firm's RAG and data sovereignty strategy.

Specialized platforms and established content providers now dominate the legal AI market, integrating AI capabilities directly into their core ecosystems. The era of standalone gadgets is over. A platform selection strategy must therefore focus on tools that plug directly into existing workflows, maintain data governance, and deliver clear, practice-area-specific value.

To minimize friction and maintain governance, firms should prioritize AI tools that are embedded directly within existing DMS platforms. Native DMS integration, such as iManage's support for the Model Context Protocol (MCP), goes beyond workflow preference: it directly implements the firm's RAG and data sovereignty strategy, keeping the grounded, authoritative data source (the DMS) secure and never exposed to “matter-blind” systems. iManage uses MCP, an open standard for connecting AI applications to external data sources, to give AI tools a standardized, secure connection to repository content while preserving all existing security and permission rules. NetDocuments' ndMAX suite brings generative AI directly to the content, offering a low-code “Legal AI App Builder” that allows teams to create custom workflows.
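The principle behind permission-preserving integration is that access rules are enforced before retrieval, so walled-off text can never enter a prompt. The sketch below does not model MCP's actual interfaces. The `Document` and `User` types, the ethical-wall set, and the simple word-overlap ranking are hypothetical stand-ins chosen to show the ordering of checks.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Document:
    doc_id: str
    matter_id: str
    text: str

@dataclass
class User:
    user_id: str
    matters: set[str] = field(default_factory=set)     # matters the user is staffed on
    walled_off: set[str] = field(default_factory=set)  # matters behind an ethical wall

def permitted(user: User, doc: Document) -> bool:
    """Mirror DMS rules: an ethical wall always wins, then matter membership is required."""
    if doc.matter_id in user.walled_off:
        return False
    return doc.matter_id in user.matters

def retrieve_for(user: User, query_terms: set[str], corpus: list[Document], k: int = 3) -> list[Document]:
    """Filter by permission BEFORE ranking, so blocked text never reaches the model."""
    visible = [d for d in corpus if permitted(user, d)]

    def overlap(d: Document) -> int:
        return len(query_terms & set(d.text.lower().split()))

    ranked = sorted(visible, key=overlap, reverse=True)
    return [d for d in ranked if overlap(d) > 0][:k]
```

Filtering after ranking would be a subtle mistake: even if blocked documents were dropped from the final list, their presence could still influence scores or leak through logs.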

Beyond the core DMS, a number of specialized legal AI assistants offer powerful, targeted capabilities:

| Tool | Focus Area | Key Strategic Capability |
|---|---|---|
| iManage AI | Knowledge Management | MCP-based secure orchestration of content |
| NetDocuments ndMAX | Workflow Automation | Low-code custom legal app building |
| CoCounsel (TR) | Litigation & Research | Grounded research with Westlaw citations |
| Lexis+ AI | Research & Analytics | AI-driven insights with predictive outcomes |
| Harvey | Enterprise Assistant | Customizable workflows for large firm panels |
| Spellbook | Transactional Law | Native MS Word contract drafting and redlining |

This landscape analysis reveals a clear bifurcation: foundational platforms (iManage, NetDocuments) that secure data, and specialized assistants (CoCounsel, Harvey) that leverage it. The strategy should be to fortify the foundation first, then deploy assistants whose capabilities are directly aligned with highest-value practice areas.

AI adoption is not uniform across the legal profession. To maximize return on investment and ensure successful adoption, initial rollout should be targeted at practice areas demonstrating the highest utility for AI tools. Based on current market data, these areas are:

- Immigration Law (47% adoption): document processing and form automation

- Personal Injury (37% adoption): medical record summarization and client intake automation

- Civil Litigation (36% adoption): e-discovery review and legal research

5. Measuring ROI and Driving Firm-Wide Adoption

Key Insight: Industry-wide, 93% of firms report that AI has reduced time spent on non-billable work, and 80% state they can deliver work faster to clients.

Quantifying Return on Investment (ROI) will determine program success. Firms must move beyond vanity metrics to measure both tangible efficiency gains and the strategic value of risk mitigation and enhanced client trust. The goal is not simply to purchase technology, but to build an integrated business capability that fundamentally enhances how legal services are delivered.

Performance should be benchmarked against industry KPIs:

- Time savings: 36.9 hours saved per month for power users, with a 50% drop in document analysis time

- Revenue impact: 32% increase for teams that effectively integrate AI into workflows

- Cost reduction: 35% decrease in service delivery costs through automation

- Throughput: 66% higher output for AI-enabled professionals compared to non-enabled peers

Beyond the raw numbers, AI delivers strategic value that strengthens competitive position. Industry-wide, 93% of firms report that AI has reduced time spent on non-billable work, and 80% state they can deliver work faster to clients. Furthermore, tools that can instantly verify factual assertions and citations improve credibility with the court and tangibly reduce the risk of malpractice.

To ensure these returns are realized, adopt proven strategies from the industry’s highest-performing firms. The adoption plan rests on three pillars:

Institute A/B testing. Mandate parallel teams on similar matters, one using traditional methods and the other a new AI workflow. This allows rigorous quantification of time savings and identifies the most valuable automation opportunities.

Dedicate learning time. Following firms like Ropes & Gray, credit a share of the billable-hour target (up to 20% for first-year associates) toward professional development focused on AI fluency. This signals that mastering these tools is a core professional skill.

Focus on outcome-based redesign. Go beyond simply automating existing tasks. Redesign legal processes from the client’s perspective to deliver the final work product more effectively.
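The A/B pillar needs a consistent way to summarize a pilot. The sketch below computes mean hours per matter for each arm, the percentage of time saved, and Welch's t statistic as a rough signal of whether the difference exceeds matter-to-matter noise. The function name and the sample figures in the usage line are hypothetical. With small pilots the t statistic is only a guide, and the comparison is meaningful only if the matters in each arm are genuinely similar.

```python
import math
from statistics import mean, stdev

def compare_arms(traditional: list[float], ai_assisted: list[float]) -> dict[str, float]:
    """Summarize an A/B pilot measured in hours per matter (each arm needs 2+ matters)."""
    m_t, m_a = mean(traditional), mean(ai_assisted)
    # Welch's standard error: does not assume the two arms have equal variance.
    se = math.sqrt(
        stdev(traditional) ** 2 / len(traditional) + stdev(ai_assisted) ** 2 / len(ai_assisted)
    )
    return {
        "mean_traditional": m_t,
        "mean_ai": m_a,
        "pct_saved": 100 * (m_t - m_a) / m_t,
        # Undefined when both arms have zero variance; reported as NaN.
        "welch_t": (m_t - m_a) / se if se else float("nan"),
    }

# Hypothetical pilot: hours spent per comparable matter in each arm.
print(compare_arms([10, 12, 11, 13], [6, 7, 5, 6]))
```

Reporting the percentage saved alongside a noise-adjusted statistic helps keep a single unusually easy matter from being mistaken for a firm-wide efficiency gain.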

6. Governance, Risk, and Compliance (GRC) Framework

Key Insight: Under ABA Rule 5.3, an AI tool is considered a “nonlawyer assistant,” making supervising lawyers ultimately responsible for the final work product it generates. Pasting client data into consumer-grade AI tools without proper technical guardrails could be argued to waive attorney-client privilege.

AI use faces significant and increasing legal and ethical scrutiny. Innovation must be guided by a framework that ensures compliance with emerging regulations, upholds professional obligations, and protects clients. This section establishes the formal Governance, Risk, and Compliance (GRC) framework that will govern all AI initiatives.

The EU AI Act has a significant extraterritorial impact, applying to any business offering AI-based services within the EU, regardless of where the business is located. Annex III of the Act classifies as “high-risk” those AI systems intended to assist a judicial authority, or to be used in a similar way in alternative dispute resolution, in researching and interpreting facts and the law. Legal research and analysis tools used in those settings therefore fall into the high-risk category. Deployers of high-risk systems must assign human oversight, and certain deployers must also complete a Fundamental Rights Impact Assessment before first use, detailing how the system will be overseen by humans to mitigate risks.

The American Bar Association (ABA) has provided clear guidance on the ethical use of AI, which should be formally adopted into firm policies. All legal professionals must adhere to three principles:

Competence (Rule 1.1). Lawyers must remain current on the benefits and risks associated with relevant technology, including AI.

Confidentiality (Rule 1.6). Lawyers must take all reasonable measures to prevent unauthorized access or disclosure of client information when using AI tools.

Supervision (Rule 5.3). An AI tool is considered a “nonlawyer assistant,” making supervising lawyers ultimately responsible for the final work product it generates.

A critical component of this framework is a strict prohibition on using consumer-grade AI systems for client work. Pasting client data into such tools without proper technical guardrails could be argued to waive attorney-client privilege.

This GRC framework provides the necessary guardrails to ensure the use of AI is responsible, ethical, and compliant. With these protections in place, firms can look ahead to the future, preparing for the next evolution of legal technology and talent.

7. The Future Trajectory: Cultivating the Augmented Law Firm

Key Insight: LexisNexis has stated a goal for its AI to automate 15-20% of lawyer tasks by 2028. Projections indicate that by 2026-2028, agentic systems will be capable of handling end-to-end business use cases.

Legal technology is moving beyond simple information retrieval toward autonomous legal agents. Projections indicate that by 2026-2028, these agentic systems will be capable of handling end-to-end business use cases. This final section is a strategic plan to future-proof the workforce, service delivery models, and competitive standing in anticipation of this next wave.

AI will not replace lawyers; it will augment them. Freed from repetitive tasks, lawyers can focus on the uniquely human aspects of practice: judgment, creativity, empathy, and advocacy. To support this evolution, firms must cultivate new hybrid roles:

Legal Knowledge Engineers specialize in structuring the firm’s legal information for machine consumption.

Legal Process Designers reimagine end-to-end delivery of legal services, using AI to create more efficient and client-centric workflows.

AI Ethics Counsel govern the use of automated legal systems, ensuring they remain compliant, fair, and aligned with firm values.

To provide a concrete target for long-term ambitions, development should align with industry benchmarks. LexisNexis has stated a goal for its AI to automate 15-20% of lawyer tasks by 2028. Firms should adopt this figure as a benchmark when setting internal targets for adopting and developing agentic AI capabilities, so that they remain at the forefront of the industry.

The Legal AI Strategy Playbook: Visual Summary

Conclusion

The legal profession’s relationship with AI has moved past experimentation. The architecture decisions made today—RAG versus ungrounded generation, on-premise versus cloud, integrated platforms versus standalone tools—will determine which firms can deliver reliable AI-assisted work product and which will face malpractice exposure from systems they don’t understand.

Three principles should guide every implementation decision. First, ground everything. RAG architecture isn't a technical preference; it's the minimum standard for work product you can defend. Second, let compliance drive architecture. Data sovereignty requirements, ethical wall obligations, and ABA rules aren't obstacles to AI adoption; they're the specification. Third, redesign workflows, don't just add tools. Reduced non-billable time, reported by 93% of firms, is the floor; the larger gains come from rebuilding processes around AI capabilities rather than bolting AI onto existing ones.

The competitive window is narrowing. Early movers are already capturing efficiency gains and building institutional knowledge. Firms that wait for “proven” solutions will find themselves licensing capabilities from competitors who built them.

The roadmap is clear. The question is execution.

See It In Practice

Strategy without implementation is just theory. The gap between “mandate RAG architecture” and a working system involves hundreds of configuration decisions: How should legal documents be chunked? What hybrid search weighting works for contract language? How do you measure whether the system is actually reliable?
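"Hybrid search weighting" usually means blending a keyword score with a semantic (vector) score. Contract language leans heavily on exact defined terms, which keyword matching catches and embeddings can blur. The sketch below uses Okapi BM25 for the keyword side, with the common defaults `k1=1.5` and `b=0.75`, which are not legal-specific values. Vector scores are taken as precomputed inputs. The weight `alpha` is an assumed tuning knob that would need to be calibrated on the firm's own labeled queries.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 keyword scores (Lucene-style IDF, always non-negative)."""
    toks = [tokenize(d) for d in docs]
    avgdl = sum(len(t) for t in toks) / len(toks)
    n = len(docs)
    df = Counter(term for t in toks for term in set(t))
    scores = []
    for t in toks:
        tf = Counter(t)
        s = 0.0
        for q in set(tokenize(query)):
            if q not in tf:
                continue
            idf = math.log(1 + (n - df[q] + 0.5) / (df[q] + 0.5))
            s += idf * tf[q] * (k1 + 1) / (tf[q] + k1 * (1 - b + b * len(t) / avgdl))
        scores.append(s)
    return scores

def min_max(xs: list[float]) -> list[float]:
    """Rescale scores to [0, 1] so keyword and vector scores are comparable."""
    lo, hi = min(xs), max(xs)
    return [0.0 if hi == lo else (x - lo) / (hi - lo) for x in xs]

def hybrid_rank(query: str, docs: list[str], vector_scores: list[float], alpha: float = 0.5) -> list[int]:
    """Blend normalized scores: alpha=1.0 is pure semantic, alpha=0.0 is pure keyword."""
    kw = min_max(bm25_scores(query, docs))
    vec = min_max(vector_scores)
    combined = [alpha * v + (1 - alpha) * k for v, k in zip(vec, kw)]
    return sorted(range(len(docs)), key=lambda i: combined[i], reverse=True)
```

Lowering `alpha` favors passages that repeat the exact defined term, which tends to matter more for contract clauses than for open-ended research questions.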

To demonstrate how these principles translate to working systems, I’m building a contract review tool that implements legal-specific RAG configuration, citation verification, and reliability measurement.
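Citation verification and reliability measurement can start with a simple mechanical check: every citation in an answer must point to a passage that was actually supplied to the model. The sketch below assumes the model was instructed to cite sources as `[SOURCE-ID]`; that format and the report fields are hypothetical. It catches fabricated citations, but not a real source cited for a proposition it does not support, which requires a further review step.

```python
import re
from dataclasses import dataclass

CITE = re.compile(r"\[([^\[\]]+)\]")  # assumed citation format: [SOURCE-ID]

@dataclass
class CitationReport:
    cited: list[str]
    unsupported: list[str]  # cited IDs that were never retrieved
    uncited_answer: bool    # answer contains no citation at all

    @property
    def passed(self) -> bool:
        return not self.unsupported and not self.uncited_answer

def verify_citations(answer: str, retrieved_ids: set[str]) -> CitationReport:
    """Flag any citation that does not match a passage actually given to the model."""
    cited = CITE.findall(answer)
    unsupported = [c for c in cited if c not in retrieved_ids]
    return CitationReport(cited, unsupported, uncited_answer=not cited)

def failure_rate(reports: list[CitationReport]) -> float:
    """Share of answers failing verification: a simple reliability metric to track over time."""
    return sum(not r.passed for r in reports) / len(reports) if reports else 0.0
```

Tracking `failure_rate` across a fixed set of test questions gives a repeatable reliability number that can be compared before and after each configuration change.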

See the Contract Review Prototype →